In previous posts I have spent a lot of time addressing landscape (sic) focus stacking. That is, non-macro focus stacking, where the focus point needs to be moved a considerable distance between frames, and by a distance that, relative to macro focus stacking, is difficult to calculate.

Although Magic Lantern has a focus stacking feature, this is better suited to macro-biased focus stacking, ie it can’t cope with landscapes. Hence my efforts to develop landscape focus stacking tools, such as my auto landscape bracketing script and my focus bar script.

In this post I offer an alternative approach, one that may at first appear to be a wasted effort, because we are going to use a very small aperture: F/22.

Reading that last sentence may have made some look away and scoff. After all, we all know about diffraction, which, simply put, convolves an additional blur on top of the lens defocus blur. In practice, we usually combine the two dominant blurs in so-called quadrature, ie total_blur = SQRT[defocus_blur^2 + diffraction_blur^2].
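As a quick illustration of the quadrature rule, here is a minimal sketch, assuming both blurs are expressed in the same units (microns here). The function name is just illustrative.

```python
import math


def total_blur(defocus_blur: float, diffraction_blur: float) -> float:
    """Combine two independent blur diameters in quadrature (root-sum-square)."""
    return math.sqrt(defocus_blur ** 2 + diffraction_blur ** 2)


# Two equal 15-micron blurs combine to about 21 microns, not 30.
print(round(total_blur(15.0, 15.0), 1))  # 21.2
```

Note how the larger of the two blurs dominates: adding a small blur to a big one barely changes the total.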

However, stay with me and read the ‘bottom line’: but first a few reminders.

We all know that diffraction depends only on the aperture. That is, the diffraction blur is simply k*N, where k is a constant and N is the aperture (f-)number. Thus the diffraction blur at F/16 is twice that at F/8, and at F/22 it is twice that at F/11.
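The post leaves k unspecified. As an assumption for illustration only, the sketch below takes k as the Airy-disk diameter factor, 2.44 times the wavelength, with a mid-green wavelength of 0.55 microns. Whatever value k takes, the F/16 vs F/8 and F/22 vs F/11 ratios come out at exactly 2.

```python
# Assumption: k = 2.44 * wavelength (Airy-disk diameter), green light at 0.55 microns.
# The post itself only says that k is a constant.
WAVELENGTH_UM = 0.55
K_UM = 2.44 * WAVELENGTH_UM  # about 1.34 microns per unit of f-number


def diffraction_blur(n: float, k: float = K_UM) -> float:
    """Diffraction blur diameter in microns, proportional to the f-number N."""
    return k * n


for n in (8, 11, 16, 22):
    print(f"F/{n}: {diffraction_blur(n):.1f} microns")
```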

The other characteristic of diffraction blur is that it is relatively constant through the scene, unlike defocus blur that varies throughout the scene and that is zero only at the point of focus.

If we focus at a distance S, the defocus blur at infinity can be estimated from (focal_length^2)/(N*S), and the so-called hyperfocal distance, H, can be estimated (ignoring diffraction) from (focal_length^2)/(N*CoC), where the ‘circle of confusion’ (CoC) is the defocus quality criterion, eg 0.03mm (30 microns) for an on-screen image from a 35mm sensor.
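Here is a minimal sketch of these two estimates, assuming every length is in millimetres. The function names and the 24mm, F/11 example values are illustrative only. Focusing exactly at H gives an infinity blur equal to the CoC, which is a handy sanity check.

```python
def infinity_blur_mm(focal_length_mm: float, n: float, focus_mm: float) -> float:
    """Approximate defocus blur (mm) at infinity when focused at focus_mm."""
    return focal_length_mm ** 2 / (n * focus_mm)


def hyperfocal_mm(focal_length_mm: float, n: float, coc_mm: float = 0.03) -> float:
    """Approximate hyperfocal distance (mm), ignoring diffraction."""
    return focal_length_mm ** 2 / (n * coc_mm)


h = hyperfocal_mm(24, 11)
print(f"H = {h / 1000:.2f} m")  # 1.75 m
print(f"Infinity blur when focused at H: {infinity_blur_mm(24, 11, h):.3f} mm")  # 0.030 mm
```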

Finally, for completeness, an estimate (sic) of the near and far depths of field can be obtained from the following:

Near DoF = H*S/(H+S) for all S focus distances

Far DoF = H*S/(H-S) for S less than H; for S greater than or equal to H the Far DoF is infinity
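These two formulas translate directly into code. This is a minimal sketch with H and S in the same units. The H of 1000mm is just an illustrative value.

```python
import math


def near_dof(h: float, s: float) -> float:
    """Near limit of depth of field: H*S/(H+S), valid for all focus distances S."""
    return h * s / (h + s)


def far_dof(h: float, s: float) -> float:
    """Far limit: H*S/(H-S) for S < H; infinity once S reaches or passes H."""
    if s >= h:
        return math.inf
    return h * s / (h - s)


h = 1000.0  # illustrative hyperfocal distance, mm
for s in (250.0, 500.0, 1000.0, 2000.0):
    print(f"S={s:.0f}: near {near_dof(h, s):.1f}, far {far_dof(h, s):.1f}")
```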

From the above we can see the basics of the hyperfocal approach: if we focus at the hyperfocal (S = H), the Near and Far DoFs are H/2 and infinity, respectively. We can also use H to estimate the ‘loss’ of near DoF if we focus past H, ie towards infinity. If we focus at a distance of 2*H, the near DoF limit moves out from H/2 to 2H/3; and if we focus at 3*H, it moves to 3H/4. In general, for x greater than or equal to 1, focusing at x*H gives a near DoF of x*H/(x+1).

The upside is that we gain quality in the far field: at a focus of 2*H the infinity defocus blur is half that at H, and at 3*H it is a third. In general, in the far field, the blur never exceeds CoC/x, for x greater than or equal to 1, and it reaches this value at infinity.
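The trade-off can be tabulated with a small sketch. The helper name is hypothetical, and H = 1000mm with a 0.03mm CoC are illustrative values. As x grows, the near limit creeps out towards H while the worst far-field blur falls as CoC/x.

```python
def focus_beyond_h(h: float, coc: float, x: float) -> tuple[float, float, float]:
    """Focus at x*H (x >= 1): return (focus distance, near DoF limit, max far-field blur)."""
    if x < 1:
        raise ValueError("x must be >= 1")
    return x * h, x * h / (x + 1), coc / x


h, coc = 1000.0, 0.03  # illustrative H (mm) and CoC (mm)
for x in (1, 2, 3):
    s, near, blur = focus_beyond_h(h, coc, x)
    print(f"x={x}: focus {s:.0f} mm, near DoF {near:.0f} mm, infinity blur {blur:.3f} mm")
```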

Note that focusing between H and 3*H is a sweet spot. There is little point seeking infinity blurs smaller than twice the sensor’s pixel pitch. For example, my 5D3 has a pixel pitch of about 6.3 microns, so blurs smaller than about 12.6 microns are rather worthless, as you need two pixels to create a line pair.

Finally, if we do decide to focus at infinity, as Harold Merklinger advocates, the near DoF will ‘collapse’ to H, the infinity blur will be zero (but note the sensor-pitch limit above), and the smallest feature we will be able to resolve in the scene will simply be the size of the aperture, ie focal_length/N.
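Combining the CoC/x rule with this two-pixel limit gives an upper bound on x. If CoC/x should not drop below 2*pitch, then x is at most CoC/(2*pitch). This is a minimal sketch using the 5D3 figure quoted above, and the function name is illustrative.

```python
def max_useful_x(coc_mm: float, pixel_pitch_mm: float) -> float:
    """Largest x worth using if the infinity blur, CoC/x, should stay >= two pixel pitches."""
    return coc_mm / (2 * pixel_pitch_mm)


# 5D3 pixel pitch of about 6.3 microns (0.0063 mm), 0.03 mm CoC
print(round(max_useful_x(0.03, 0.0063), 2))  # 2.38
```

An x of about 2.4 sits comfortably inside the H to 3*H sweet spot.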

Thus it appears we have three options if, in a single image, we wish to maximise the focus from infinity to a near point:

- Focus at the hyperfocal and realise a DoF from H/2 to infinity, but only achieve an infinity quality (CoC criterion) of ‘just acceptable’

- Focus at infinity and realise a DoF from H to infinity and achieve an infinity quality beyond (sic) that which is resolvable by the sensor

- Focus between H and x*H (x greater than 1 and less than or equal to about 3) and realise a DoF from x*H/(x+1) to infinity and achieve an infinity quality (CoC criterion) of CoC/x

But let’s say none of the above works. That is, the near DoF is still too far away and/or the far-field quality is not acceptable: then we need to resort to focus stacking.

In past posts I’ve covered the classical approach to (landscape) focus stacking: that is, take several images at different focus positions (eg using my auto bracketing script or my Focus Bar script to position the brackets), and combine these in a focus stacking programme, like Helicon Focus, Zerene Stacker or Photoshop. But in this post we will ignore this approach.

As we know, one alternative is to ‘recover’ near DoF simply by stopping down. For example, if we first select F/11 and calculate H, then by stopping down to F/22, ie halving the aperture diameter, we will halve H; and, of course, if we focus at this new hyperfocal, the near DoF will reduce to H/4, ie half of H/2.
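Here is a quick numerical check of that halving, as a minimal sketch using the post's 24mm lens and 0.03mm CoC, with all lengths in millimetres. The helper name is illustrative.

```python
def hyperfocal_mm(focal_length_mm: float, n: float, coc_mm: float = 0.03) -> float:
    """Approximate hyperfocal distance (mm), ignoring diffraction."""
    return focal_length_mm ** 2 / (n * coc_mm)


h11 = hyperfocal_mm(24, 11)
h22 = hyperfocal_mm(24, 22)
print(f"H at F/11: {h11:.0f} mm, H at F/22: {h22:.0f} mm")
# Focusing at the F/22 hyperfocal puts the near DoF at h22/2, which equals h11/4.
print(f"Near DoF at F/22: {h22 / 2:.0f} mm (H11/4 = {h11 / 4:.0f} mm)")
```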

However, in using F/22 we have also doubled the diffraction blur and the ‘wisdom’ out there is that we should not use (35mm) apertures much beyond F/11 or F/16. But let’s ignore what others say and experiment.

The ‘new’ technique, which I’m calling F/22 Bracketing, is relatively simple, and simpler still if you are using Magic Lantern, although it can be used without ML. It goes like this; for illustration, we will assume a 24mm lens and an infinity blur objective of 15 microns, ie half the standard CoC (0.03/2):

- Set the camera to F/22 to maximise the defocus DoF;

- Estimate the (normal, ie 0.03 CoC without diffraction) hyperfocal distance, ie 24*24/(22*0.03), which is about 0.87m

- As we are seeking a high-quality image, ie an infinity defocus blur of 0.015, x is 2 (ie 0.03/0.015), so we need to focus at x*H, ie at about 1.7m

- Estimate the near DoF, from x*H/(x+1), which is 2H/3 or about 0.58m (at a blur of 0.03).
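The recipe above can be wrapped up in a few lines. This is a minimal sketch with a hypothetical helper name, assuming millimetres throughout and the post's defaults of F/22, a 0.03mm CoC and a 0.015mm infinity-blur target. It reproduces the 24mm figures, and the same call with a 12mm lens gives the wide-angle numbers.

```python
def f22_bracket_plan(focal_length_mm: float, n: float = 22, coc_mm: float = 0.03,
                     target_blur_mm: float = 0.015) -> tuple[float, float, float, float]:
    """Return (H, x, focus distance, near DoF limit) in mm for the F/22 recipe."""
    h = focal_length_mm ** 2 / (n * coc_mm)  # hyperfocal, ignoring diffraction
    x = coc_mm / target_blur_mm              # eg 0.03 / 0.015 = 2
    focus = x * h                            # focus at x*H
    near = x * h / (x + 1)                   # near DoF at the 0.03 mm criterion
    return h, x, focus, near


for f in (24, 12):
    h, x, focus, near = f22_bracket_plan(f)
    print(f"{f}mm: H={h / 1000:.2f} m, x={x:.0f}, focus={focus / 1000:.2f} m, near={near / 1000:.2f} m")
```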

So at F/22, maximising the far-field (infinity) quality, we will capture an image with a DoF that runs from 0.58m, with a CoC criterion of 30 microns, to infinity, where the CoC criterion is 15 microns. In the far field the defocus blur will never exceed 0.015, but in the near field, objects closer than 0.58m will suffer defocus blurring beyond 30 microns.

BTW if we were using a wide angle 12mm lens the above would come out at:

- H = 0.22m (@ CoC of 0.03)

- Infinity defocus blur = CoC/2 = 0.015

- Focus at 2*H = 0.44m to achieve the 0.015 infinity blur, ie high quality focus

- Near DoF = 2*H/3 = about 0.15m (@ a defocus blur of 0.03)

But we still haven’t dealt with that diffraction softening.

The key aspect of the technique is to ‘recover’ the diffraction blur by using a super-resolution approach, augmented by the power of Photoshop. That is, simply take additional images around the point of focus, where each image is ‘pixel displaced’ relative to the others.

Although we are seeing some cameras with built-in sensor shifting, most cameras do not have this feature. A poor man’s version is either to let the image shift physically between frames, eg if you are hand-holding, or, as we are likely to be on a tripod to cope with long shutter times, to shift the focus slightly between images.

If you have Magic Lantern, simply go to the Focus menu and select Focus Stacking. Set the number of images in front of and behind the focus point to, say, 3 each, for a total of 7 images, and set the step size to the smallest, ie 1. That’s it: ML does the rest.

The final stage is to post-process in Photoshop: bring all the images into a single layered file, auto-align the layers, convert the layers into a smart object and apply the Median stack mode (Layer > Smart Objects > Stack Mode > Median). Finally, use Smart Sharpen, with Remove set to Lens Blur, to fine-tune the result.
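To show what the median step is doing, here is a minimal sketch of a per-pixel median over frames that are assumed to be already aligned and the same size, held as plain greyscale lists of rows. It is not Photoshop's implementation, and the function name is illustrative. Note how a single outlier at one pixel is rejected.

```python
from statistics import median


def median_stack(frames: list[list[list[float]]]) -> list[list[float]]:
    """Per-pixel median of already-aligned, same-sized greyscale frames."""
    height, width = len(frames[0]), len(frames[0][0])
    return [
        [median(frame[r][c] for frame in frames) for c in range(width)]
        for r in range(height)
    ]


frames = [
    [[10, 20], [30, 40]],
    [[12, 20], [30, 41]],
    [[90, 21], [29, 40]],  # an outlier at the top-left pixel
]
print(median_stack(frames))  # [[12, 20], [30, 40]]
```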

So is it all worth it?

Maybe, maybe not: but I had fun experimenting with the idea and trying it out.

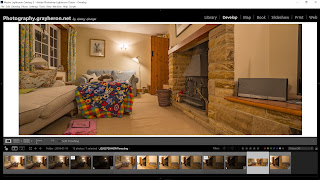

By the way, here is a test shot I just did, with a zoomed in (2:1) screen shot of some detail. On the left is the image at the point of focus and on the right the ‘F/22 focus stacked’ one. I used three images either side of the point of focus, as taken by the ML focus stacking feature, and processed the resultant 7 images as above. There is certainly a quality difference, ie the F/22 approach is clearer/sharper.

Bottom line: I think there may be something in this F/22 Bracketing technique, and I can see it having value for, say, indoor architectural shooting, ie where there are not many trees blowing in the wind. I’ll carry on experimenting and report my findings in future posts.